You have probably heard the term “Quantum Computing”. In this article I will try to explain what quantum computing is and how it might completely change the way computing is done.

To understand how quantum computing differs from conventional computing, I first need to explain how conventional computers work. Conventional computers do two things really well: they store numbers in memory and they process those numbers according to commands given by the user (or an application). Using complex algorithms, computers can even teach themselves new things, but that’s a story for another time (neural networks, machine learning, advanced machine learning). To store and process these numbers, computers use microscopic switches called transistors, which can be as small as 10 nm; in comparison, a red blood cell is roughly 7,000 nm across. A transistor is either on or off, representing a 1 or a 0. Placed together in long strings, these switches can represent any number, letter or symbol using binary coding. To do calculations, a computer uses circuits called logic gates, each built from a number of transistors. These logic gates compare patterns of bits stored in registers (temporary memory) and turn them into new patterns. For large calculations, the output of one logic gate is used as the input for the next. This all happens at an unfathomable speed. [1]
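
To make the idea of binary coding and chained logic gates a bit more concrete, here is a minimal sketch in Python. The function names (`to_bits`, `half_adder`, `full_adder`, `add_bits`) are my own and purely illustrative; real hardware does this with transistors, not function calls.

```python
def to_bits(value, width=8):
    """Return a non-negative integer as a zero-padded string of bits."""
    return format(value, "0{}b".format(width))


def AND(a, b):
    return a & b


def OR(a, b):
    return a | b


def XOR(a, b):
    return a ^ b


def half_adder(a, b):
    """Add two single bits; return (sum_bit, carry_bit)."""
    return XOR(a, b), AND(a, b)


def full_adder(a, b, carry_in):
    """Add two bits plus an incoming carry by chaining two half adders."""
    s1, c1 = half_adder(a, b)
    s2, c2 = half_adder(s1, carry_in)
    return s2, OR(c1, c2)


def add_bits(x, y):
    """Add two equal-length bit strings, feeding each carry into the next adder."""
    carry = 0
    out = []
    for a, b in zip(reversed(x), reversed(y)):
        s, carry = full_adder(int(a), int(b), carry)
        out.append(str(s))
    out.append(str(carry))  # the final carry becomes the leading bit
    return "".join(reversed(out))


print(to_bits(ord("A")))         # the letter "A" stored as 01000001
print(add_bits("0101", "0011"))  # 5 + 3 = 8, printed as 01000
```

Note how `add_bits` uses the carry output of one adder as input for the next: that is the "output of one gate is the input of the next" idea on a very small scale.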

The problem with conventional computers is that immensely complex problems require many more of these steps, performed one after the other. Some problems are so complex that a conventional (super)computer would need hundreds of years to solve them; computer scientists call these intractable problems. Even though Moore’s law doesn’t seem to be slowing down anytime soon, transistors cannot keep shrinking forever: at some point they would be as small as atoms and could not be made any smaller. This is why the interest in quantum computing is growing every day. [1]

Quantum computing

The key difference between a conventional computer and a quantum computer is something called a qubit (short for quantum bit). Unlike a normal bit (transistor), which stores either a one or a zero, a qubit can also be in a combination of both at the same time. This ability to represent multiple states at once is called superposition. Think of it like this: when you play a guitar chord it sounds like one note, but it is actually a combination of different strings being struck at the same time. The same goes for a qubit in superposition; it represents multiple numeric values at the same time.
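
As a rough illustration of superposition, a single qubit can be written down as two numbers (amplitudes), one for "0" and one for "1", whose squared magnitudes add up to 1. The sketch below is a toy model on a normal computer, not a real quantum device; the names `ZERO`, `hadamard` and `probabilities` are my own choices. The Hadamard gate used here is a standard gate that turns a plain 0 into an equal mix of 0 and 1.

```python
import math

# A qubit as a pair of amplitudes (a0, a1) with |a0|^2 + |a1|^2 = 1.
ZERO = (1.0, 0.0)  # definitely 0
ONE = (0.0, 1.0)   # definitely 1


def hadamard(state):
    """Apply the Hadamard gate to a single-qubit state."""
    a0, a1 = state
    s = 1 / math.sqrt(2)
    return (s * (a0 + a1), s * (a0 - a1))


def probabilities(state):
    """Return the chance of reading 0 and the chance of reading 1."""
    a0, a1 = state
    return (abs(a0) ** 2, abs(a1) ** 2)


plus = hadamard(ZERO)       # an equal superposition of 0 and 1
print(probabilities(ZERO))  # all weight on 0
print(probabilities(plus))  # roughly half on 0, half on 1
```

In the guitar analogy, the two amplitudes play the role of how hard each string is struck.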

Another key feature of qubits is that they can process these values all at once, instead of working in steps one after another. It is only when you try to find out what state a qubit is actually in (for example, by sending it through a filter) that it collapses into either a one or a zero. This means that a quantum computer can, in a sense, perform every possible calculation at the same time, but you can only measure one result. To check whether you have the correct result, you need to repeat the computation a number of times. But by using quantum computing tricks like entanglement, some problems can be solved exponentially more efficiently than would be possible on a normal computer. Entanglement of qubits means that the state of one qubit is directly related to that of another qubit; for example, they can be negatively correlated (if the first is a 1, the other is always a 0) or positively correlated (if the first is a 1, the second is also a 1). [2] Peter W. Shor [3] points out that for a system of n two-state components, a complete description of its state in classical physics requires only n bits, whereas in quantum physics it requires 2^n − 1 complex numbers. This exponentially larger state space is what allows a quantum computer to be significantly faster than a conventional computer at certain tasks, such as factoring large numbers with Shor’s algorithm.
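
The collapse on measurement and the two kinds of correlation can be mimicked with a small simulation. This is only a sketch under simplifying assumptions: the two-qubit state is stored as a dictionary of amplitudes, and "measuring" simply picks one outcome with probability equal to the squared amplitude. The names `BELL_SAME`, `BELL_OPPOSITE`, `measure` and `run` are hypothetical.

```python
import random

S = 2 ** -0.5  # amplitude 1/sqrt(2), so each allowed outcome has probability 1/2

# Amplitudes for the four possible readings of two qubits.
BELL_SAME = {"00": S, "01": 0.0, "10": 0.0, "11": S}      # positively correlated
BELL_OPPOSITE = {"00": 0.0, "01": S, "10": S, "11": 0.0}  # negatively correlated


def measure(state, rng):
    """Pick one outcome with probability |amplitude|^2 (the 'collapse')."""
    outcomes = list(state)
    weights = [abs(state[o]) ** 2 for o in outcomes]
    return rng.choices(outcomes, weights=weights, k=1)[0]


def run(state, shots, seed=0):
    """Prepare and measure the same state many times; count the outcomes."""
    rng = random.Random(seed)
    counts = {}
    for _ in range(shots):
        outcome = measure(state, rng)
        counts[outcome] = counts.get(outcome, 0) + 1
    return counts


print(run(BELL_SAME, 1000))      # only "00" and "11" ever show up
print(run(BELL_OPPOSITE, 1000))  # only "01" and "10" ever show up
```

Each single run gives just one answer, which is why the computation has to be repeated; the correlation between the two qubits only becomes visible in the counts.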

What will the future of quantum computing look like?

At the Quantum Europe 2016 conference, some companies actually showed technology based on quantum mechanics, focused mostly on infrastructure and security. For example, Toshiba showed its Quantum Key Distribution system, which allows information to be sent via fibre optic cables using single photons (the particles of light). With this method the receiver can tell whether the message was intercepted by anyone else. This secure communication method is called quantum cryptography. However, according to Sander Hofman, Corporate Communications Manager at ASML, if and when quantum indeed materializes as a viable (cost-efficient) technology, it will offer enormous advantages to network infrastructure, but it will very likely be complementary to classical computing. [4]
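
How can a receiver notice an eavesdropper? The source does not say which protocol Toshiba's system uses, so the sketch below assumes BB84, a well-known quantum key distribution scheme, and simulates it very crudely with random numbers. The function name `bb84_error_rate` and the basis labels "+" and "x" are my own. The key idea: anyone who measures a photon in the wrong basis disturbs it, and that disturbance shows up as errors when sender and receiver compare part of their key.

```python
import random


def bb84_error_rate(n_photons, eavesdrop, seed=0):
    """Toy BB84 run: return the error rate in the bits sender and receiver keep."""
    rng = random.Random(seed)
    kept = 0
    errors = 0
    for _ in range(n_photons):
        bit = rng.randint(0, 1)
        sender_basis = rng.choice("+x")
        photon_bit, photon_basis = bit, sender_basis

        if eavesdrop:
            spy_basis = rng.choice("+x")
            if spy_basis != photon_basis:
                photon_bit = rng.randint(0, 1)  # wrong basis: random result
            photon_basis = spy_basis            # the spy resends in her own basis

        receiver_basis = rng.choice("+x")
        if receiver_basis == photon_basis:
            received_bit = photon_bit
        else:
            received_bit = rng.randint(0, 1)    # wrong basis: random result

        # Only photons where sender and receiver happened to use the same
        # basis are kept for the key.
        if receiver_basis == sender_basis:
            kept += 1
            errors += int(received_bit != bit)
    return errors / kept if kept else 0.0


print(bb84_error_rate(2000, eavesdrop=False))  # no errors without a spy
print(bb84_error_rate(2000, eavesdrop=True))   # around a quarter of the kept bits are wrong
```

In this simplified model a clean line gives an error rate of zero, while an eavesdropper who measures every photon pushes it to roughly 25%, which is easy to spot.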

There are some technologies based on quantum physics, but according to McKinsey’s Marc de Jong it will take at least a decade before something really interesting can be done with them. And even then they might only have niche applications (like today’s supercomputers). [4]

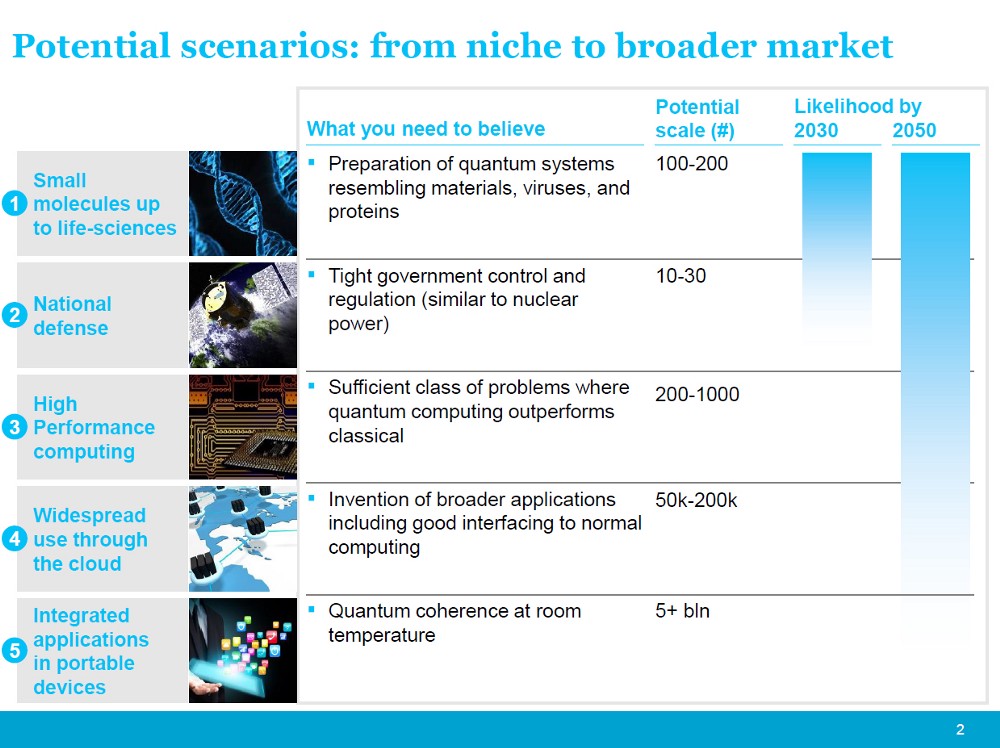

Figure 1 McKinsey’s potential quantum scenarios [5]

The European Union, however, is betting big: in April 2016 it announced plans for a giant one-billion-euro project to boost a multitude of quantum technologies, from secure communication networks to quantum simulators that can help discover new materials or complex proteins to battle diseases. [6]

But what about quantum computing? As you can see in the picture provided by McKinsey, a fully working quantum computer that everyone can have at home will probably take more than half a century to develop. And even if it ever gets there, who says that conventional computing will not have evolved to a state where it can compete with these quantum computers? Another factor to take into account is that, so far, quantum computers are only better than conventional computers at two algorithms: Shor’s algorithm (factoring large numbers, which can be used to break conventional security keys) and Grover’s algorithm (speeding up searches in a large unsorted database). In other words, if conventional computers become fast enough before quantum computers become cost-efficient, they can still outperform quantum computers in most scenarios. [1]
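
To give a feel for the kind of speed-up Grover’s algorithm offers, here is a small simulation of it on a normal computer. It is a sketch only: it tracks one amplitude per database entry, which is exactly what a real quantum computer would not need to do, and the function names are my own. A conventional search through N unsorted items needs about N/2 lookups on average, while Grover’s algorithm needs roughly (π/4)·√N steps.

```python
import math


def optimal_iterations(n_items):
    """Approximate number of Grover steps that maximizes the success chance."""
    return math.floor(math.pi / 4 * math.sqrt(n_items))


def grover_success_probability(n_items, marked, iterations):
    """Simulate Grover's search; return the chance of measuring `marked`."""
    # Start in an equal superposition over all items.
    amp = [1 / math.sqrt(n_items)] * n_items
    for _ in range(iterations):
        amp[marked] = -amp[marked]         # oracle: flip the sign of the target
        mean = sum(amp) / n_items
        amp = [2 * mean - a for a in amp]  # diffusion: reflect about the mean
    return amp[marked] ** 2


n = 1024
steps = optimal_iterations(n)
print(steps, "quantum steps versus about", n // 2, "classical lookups")
print(grover_success_probability(n, marked=123, iterations=steps))
```

The gain is real but "only" quadratic, which fits the point above: for many everyday tasks a fast conventional computer remains hard to beat.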

In conclusion, more and more research is being done on applications of quantum mechanics, but in the near future it will most likely only result in applications for niche markets. It will be a long time before we as consumers see quantum computers in our homes, if the technology ever gets that far.

[1] Woodford, C. (2017, November 1). Quantum computing. Retrieved November 7, 2017, from explainthatstuff.com: http://www.explainthatstuff.com/quantum-computing.html

[2] Kurzgesagt – In a Nutshell. (2015, December 8). Quantum Computers Explained – Limits of Human Technology. Retrieved November 7, 2017, from YouTube: https://www.youtube.com/watch?v=JhHMJCUmq28

[3] Shor, P. W. (1999). Polynomial-time algorithms for prime factorization and discrete logarithms on a quantum computer. SIAM Review, 41(2), 303-332.

[4] Hofman, S. (2016, July 12). Start your engines! The race to quantum computing is on. Retrieved November 7, 2017, from medium.com: https://medium.com/@ASMLcompany/start-your-engines-the-race-to-quantum-computing-is-on-14c3076a5c47

[5] de Jong, M. (2016). Quantum Europe 2016. Amsterdam.

[6] Gibney, E. (2016, April 21). Europe plans giant billion-euro quantum technologies project. Retrieved November 7, 2017, from nature.com: http://www.nature.com/news/europe-plans-giant-billion-euro-quantum-technologies-project-1.19796